AI That Does Something, Not Just Demos

Most AI projects stall at the demo stage. The prototype works in a notebook, but nobody can figure out how to make it reliable, fast, and affordable in production. I build AI-powered applications that handle real traffic, recover from API failures, stay within budget, and deliver consistent results. These are production systems with proper error handling, not chatbot demos.

I work with Claude (Anthropic) and GPT-4 (OpenAI) through their TypeScript SDKs, and I use AI pair programming techniques daily to accelerate development. Every integration ships with retry logic, response validation, cost tracking, and fallback behavior. When the AI API is slow or returns unexpected output, the application handles it gracefully instead of crashing.
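As a rough illustration of retry logic, response validation, and fallback behavior working together, here is a minimal sketch. It does not use any real SDK: `ModelCall`, `callWithFallback`, and `RetryOptions` are hypothetical names, and the retry policy (exponential backoff, then a single attempt on a fallback model) is an assumption rather than a description of any specific project.

```typescript
// Hypothetical sketch: none of these names come from a real SDK.
// A ModelCall stands in for any function that sends a prompt to an LLM API.
type ModelCall = (prompt: string) => Promise<string>;

interface RetryOptions {
  maxAttempts: number; // attempts against the primary model
  baseDelayMs: number; // first backoff delay; doubles after each failure
  sleep?: (ms: number) => Promise<void>; // injectable so tests run instantly
}

const defaultSleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

async function callWithFallback<T>(
  prompt: string,
  primary: ModelCall,
  fallback: ModelCall,
  validate: (raw: string) => T | undefined, // returns undefined for bad output
  options: RetryOptions,
): Promise<T> {
  const sleep = options.sleep ?? defaultSleep;
  for (let attempt = 0; attempt < options.maxAttempts; attempt++) {
    try {
      const parsed = validate(await primary(prompt));
      if (parsed !== undefined) return parsed;
      // Invalid output is treated like a failure and retried.
    } catch {
      // Network or API error: fall through to the backoff below.
    }
    if (attempt < options.maxAttempts - 1) {
      await sleep(options.baseDelayMs * 2 ** attempt);
    }
  }
  // Primary exhausted: make one attempt on the fallback model.
  const parsed = validate(await fallback(prompt));
  if (parsed === undefined) {
    throw new Error("No valid response from primary or fallback model");
  }
  return parsed;
}
```

The validator is what keeps unexpected output from reaching the rest of the application: a response that fails validation is handled the same way as a failed request.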

What I Build with LLMs

The most valuable AI applications automate work that currently requires a person to read, interpret, and act on unstructured information. Content generation pipelines that match your brand voice. Document analysis tools that extract structured data from contracts, invoices, and emails. Intelligent search that understands what users mean, not just what they type. Customer support automation that handles routine questions and escalates complex ones.

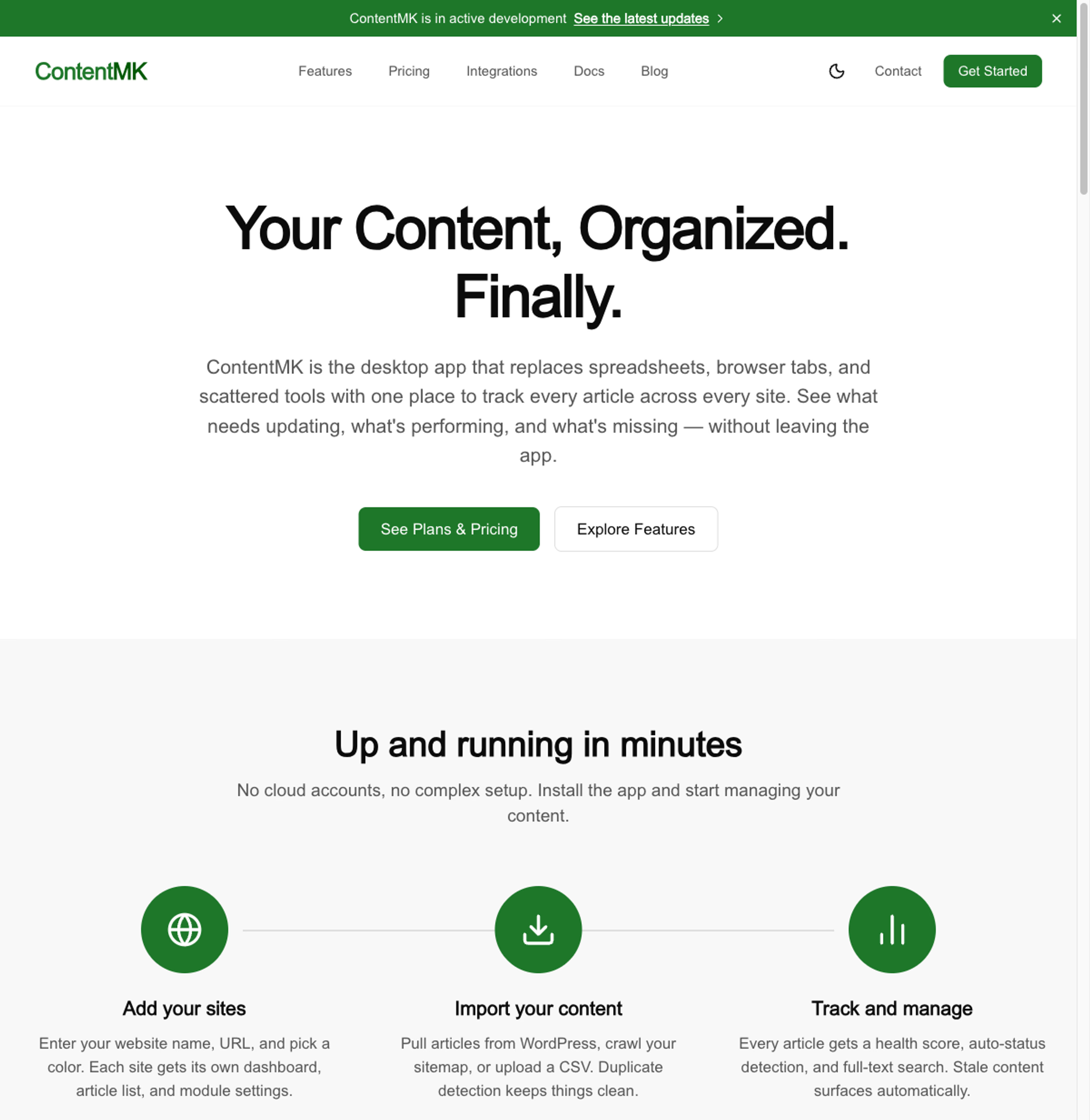

ContentMK integrates AI with per-workspace access controls and configuration. Each workspace can use different models, different system prompts, and different rate limits. The AI layer plugs into the content management workflow without replacing it. That is the pattern: AI as a tool inside a larger system, not AI as the entire product. I explore this approach further in my piece on vibe coding in professional development.
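To make the per-workspace idea concrete, here is a minimal sketch of how workspace-level overrides and a rate limit could be modeled. This is not ContentMK's actual code: `WorkspaceAiConfig`, `resolveConfig`, `FixedWindowLimiter`, and the placeholder model names are all assumptions made for illustration.

```typescript
// Hypothetical sketch of per-workspace AI settings; not taken from ContentMK.
interface WorkspaceAiConfig {
  model: string; // placeholder model identifier
  systemPrompt: string;
  requestsPerMinute: number;
}

const DEFAULT_CONFIG: WorkspaceAiConfig = {
  model: "default-model-placeholder",
  systemPrompt: "You are a helpful writing assistant.",
  requestsPerMinute: 30,
};

// Merge a workspace's overrides on top of the defaults.
function resolveConfig(
  overrides: Record<string, Partial<WorkspaceAiConfig>>,
  workspaceId: string,
): WorkspaceAiConfig {
  return { ...DEFAULT_CONFIG, ...(overrides[workspaceId] ?? {}) };
}

// Simple fixed-window limiter: at most `limitPerMinute` calls per 60 seconds.
class FixedWindowLimiter {
  private windowStart = 0;
  private count = 0;
  private readonly limitPerMinute: number;

  constructor(limitPerMinute: number) {
    this.limitPerMinute = limitPerMinute;
  }

  // The caller passes the current time so the limiter is easy to test.
  tryAcquire(nowMs: number): boolean {
    if (nowMs - this.windowStart >= 60_000) {
      this.windowStart = nowMs;
      this.count = 0;
    }
    if (this.count >= this.limitPerMinute) return false;
    this.count++;
    return true;
  }
}
```

In a sketch like this, one limiter instance would be kept per workspace, constructed from that workspace's resolved `requestsPerMinute`.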

MCP Servers: Connecting AI to Your Data

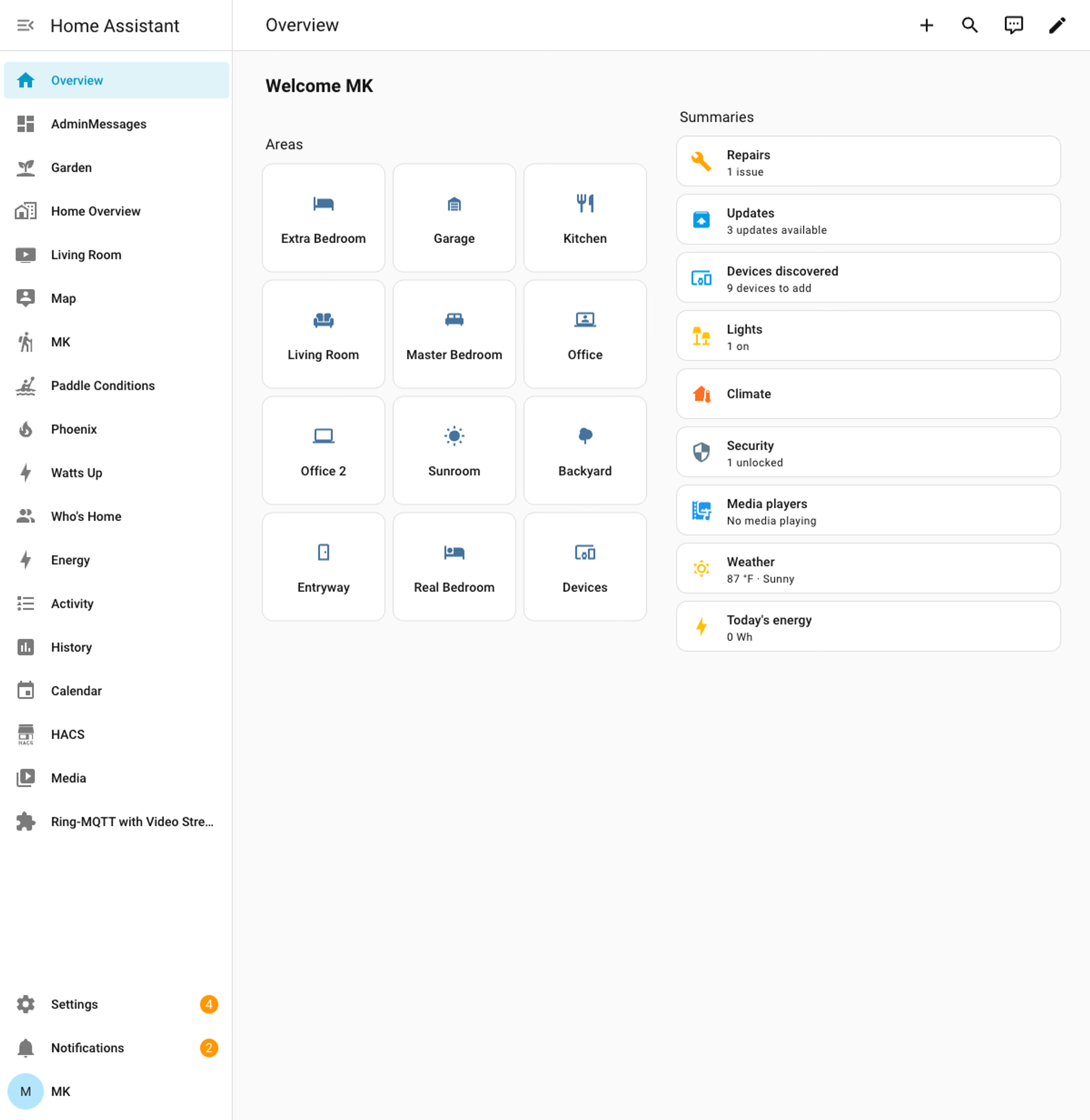

MCP (Model Context Protocol) is an emerging open standard for connecting AI assistants to external data sources. I build custom MCP servers that give Claude and other AI tools structured access to your databases, APIs, and business systems. Instead of copying data into chat windows, the AI queries your systems directly through typed tool interfaces.
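The idea of a typed tool interface can be sketched without any SDK. MCP tools are described by a name, a description, and a JSON Schema for their input; the example below mirrors that shape in a simplified form. It is an illustration only, not the MCP SDK: `ToolDefinition`, `callTool`, the `lookup_order` tool, and the in-memory `ORDERS` data are all hypothetical, and the handler is synchronous to keep the example short.

```typescript
// Simplified illustration of a typed tool interface; this is NOT the MCP SDK.
type JsonType = "string" | "number" | "boolean";

interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: JsonType }>;
    required: string[];
  };
  handler: (args: Record<string, unknown>) => unknown;
}

// Hypothetical in-memory data standing in for a real database or API.
const ORDERS: Record<string, { status: string }> = {
  "A-100": { status: "shipped" },
};

const TOOLS: ToolDefinition[] = [
  {
    name: "lookup_order",
    description: "Return the status of an order by its ID.",
    inputSchema: {
      type: "object",
      properties: { orderId: { type: "string" } },
      required: ["orderId"],
    },
    handler: (args) => ORDERS[args.orderId as string] ?? null,
  },
];

// Validate arguments against the declared schema before running the handler.
function callTool(
  tools: ToolDefinition[],
  name: string,
  args: Record<string, unknown>,
): unknown {
  const tool = tools.find((t) => t.name === name);
  if (!tool) throw new Error(`Unknown tool: ${name}`);
  for (const key of tool.inputSchema.required) {
    if (!(key in args)) throw new Error(`Missing argument: ${key}`);
  }
  for (const key of Object.keys(args)) {
    const spec = tool.inputSchema.properties[key];
    if (!spec) throw new Error(`Unexpected argument: ${key}`);
    if (typeof args[key] !== spec.type) {
      throw new Error(`Argument ${key} must be of type ${spec.type}`);
    }
  }
  return tool.handler(args);
}
```

Because the schema travels with the tool, the assistant can discover what arguments a tool accepts, and the server can reject malformed calls before they reach your systems.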

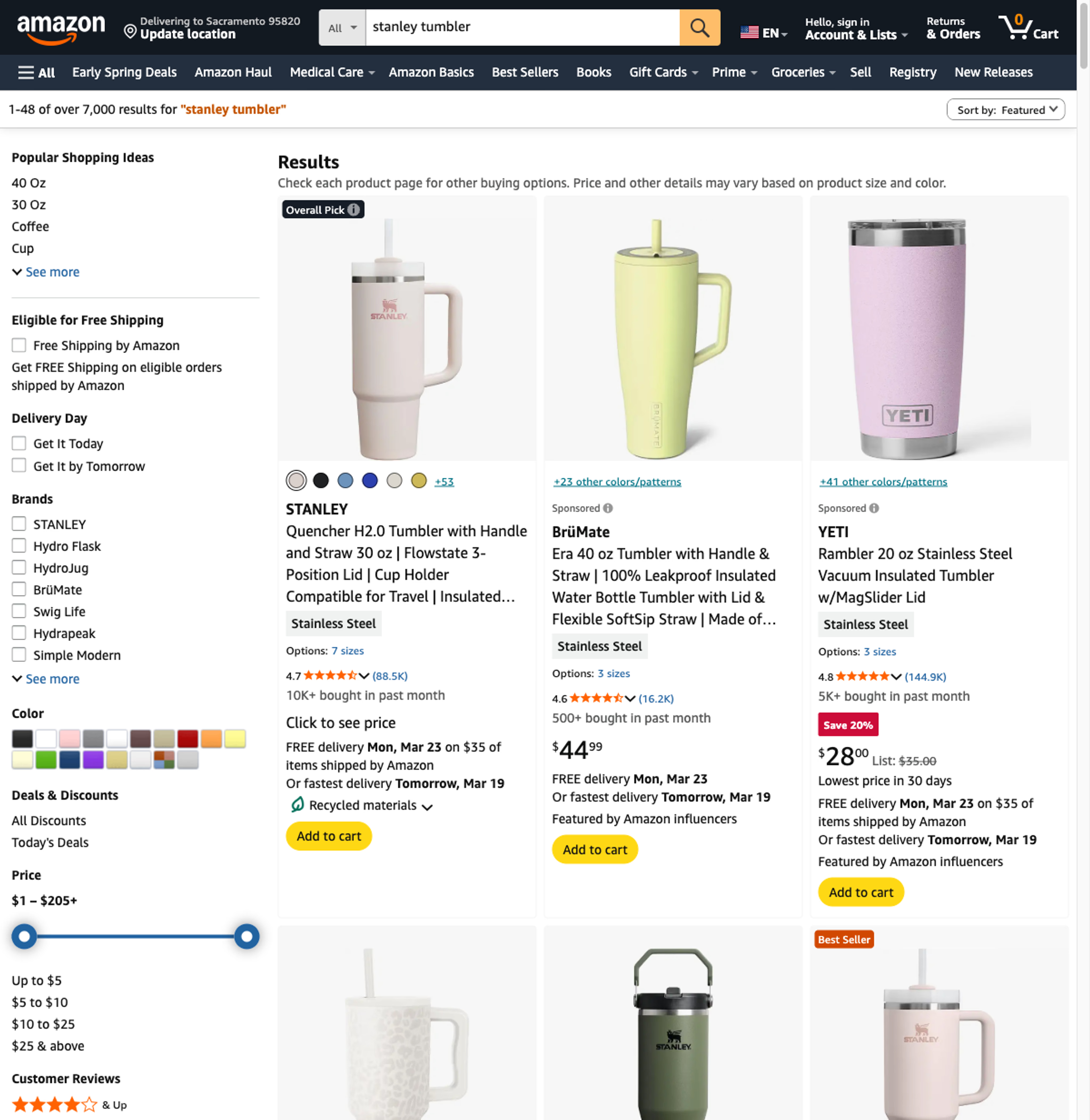

Amazon Creators API is an MCP server I built that wraps the Amazon Product Advertising API. It gives AI assistants access to product search, pricing, and catalog data through clean tool definitions. The same architecture works for any API or database you want to expose to AI workflows.

All of this work starts with the development environment. I use an AI Architect approach to set up custom Claude Code skills, hooks, and MCP servers matched to your codebase before building AI features on top of it.

Cost Control That Scales

LLM API costs can spiral fast. A single poorly designed prompt hitting GPT-4 in a loop can cost hundreds of dollars in an afternoon. I implement three layers of cost control on every AI project. First, prompt engineering that minimizes token usage without sacrificing output quality. Second, intelligent caching that avoids redundant API calls for similar inputs. Third, model routing that sends simple tasks to cheaper models and reserves premium models for complex reasoning.
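The caching layer can be sketched as a small time-limited cache keyed on a normalized prompt. This is a minimal example under stated assumptions: `normalizePrompt` and `ResponseCache` are hypothetical names, and the normalization used here (trimming, lowercasing, collapsing whitespace) is only one possible definition of "similar inputs". It would be the wrong choice for prompts where letter case carries meaning.

```typescript
// Hypothetical sketch of a response cache; names and policy are assumptions.

// Treat prompts that differ only in case or spacing as the same request.
function normalizePrompt(prompt: string): string {
  return prompt.trim().toLowerCase().replace(/\s+/g, " ");
}

class ResponseCache {
  private readonly entries = new Map<string, { value: string; expiresAt: number }>();
  private readonly ttlMs: number;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  // Returns the cached response, or undefined on a miss or expired entry.
  get(prompt: string, nowMs: number): string | undefined {
    const key = normalizePrompt(prompt);
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (nowMs >= entry.expiresAt) {
      this.entries.delete(key);
      return undefined;
    }
    return entry.value;
  }

  set(prompt: string, value: string, nowMs: number): void {
    this.entries.set(normalizePrompt(prompt), {
      value,
      expiresAt: nowMs + this.ttlMs,
    });
  }
}
```

A caller would check `get` before making an API request and call `set` with the response afterwards, so only cache misses incur a paid API call.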

I also build per-user and per-organization spending caps, usage dashboards, and alerting. You know exactly what your AI features cost, and no single user or workflow can exceed the budget you set.
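A spending cap can be reduced to a simple check before each request: would this call push the user or the organization over budget? The sketch below shows one way to express that. `BudgetGuard`, `Caps`, and the use of integer cents are assumptions for illustration; a production version would persist spend in a database and reset it per billing period.

```typescript
// Hypothetical sketch of per-user and per-organization spending caps.
interface Caps {
  perUserCents: number;
  perOrgCents: number;
}

class BudgetGuard {
  private readonly userSpend = new Map<string, number>();
  private readonly orgSpend = new Map<string, number>();
  private readonly caps: Caps;

  constructor(caps: Caps) {
    this.caps = caps;
  }

  // Records the charge and returns true only if both caps still hold.
  // A rejected charge leaves the recorded spend unchanged.
  tryCharge(userId: string, orgId: string, costCents: number): boolean {
    const userTotal = (this.userSpend.get(userId) ?? 0) + costCents;
    const orgTotal = (this.orgSpend.get(orgId) ?? 0) + costCents;
    if (userTotal > this.caps.perUserCents || orgTotal > this.caps.perOrgCents) {
      return false;
    }
    this.userSpend.set(userId, userTotal);
    this.orgSpend.set(orgId, orgTotal);
    return true;
  }

  // Current spend for a user, for example to feed a usage dashboard.
  spentByUser(userId: string): number {
    return this.userSpend.get(userId) ?? 0;
  }
}
```

Tracking money in integer cents avoids floating-point rounding drift when many small charges are summed.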

The Right Model for the Job

Claude excels at long document analysis, nuanced writing, and multi-step reasoning. GPT-4 has the broadest ecosystem and the most third-party integrations. Smaller models handle classification, extraction, and simple generation at a fraction of the cost. I choose the model based on your specific use case and implement routing logic so different tasks use different models automatically. I wrote about using Claude Code for web development and how it fits into production workflows.
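Routing logic of this kind can be as simple as a lookup from task type to model tier. The sketch below is one hedged way to write it. `TaskKind`, `routeModel`, the placeholder model names, and the token threshold that escalates large inputs to the premium tier are all assumptions chosen for illustration, not fixed rules.

```typescript
// Hypothetical routing sketch; task kinds, model names, and threshold are assumptions.
type TaskKind =
  | "classification"
  | "extraction"
  | "simple_generation"
  | "long_document"
  | "multi_step_reasoning";

type Tier = "small" | "premium";

const TIER_BY_TASK: Record<TaskKind, Tier> = {
  classification: "small",
  extraction: "small",
  simple_generation: "small",
  long_document: "premium",
  multi_step_reasoning: "premium",
};

// Placeholder identifiers; substitute the real model IDs your provider offers.
const MODEL_BY_TIER: Record<Tier, string> = {
  small: "small-model-placeholder",
  premium: "premium-model-placeholder",
};

// Assumed limit above which even simple tasks are sent to the premium tier.
const SMALL_MODEL_MAX_INPUT_TOKENS = 8_000;

function routeModel(kind: TaskKind, inputTokens: number): string {
  const tier: Tier =
    inputTokens > SMALL_MODEL_MAX_INPUT_TOKENS ? "premium" : TIER_BY_TASK[kind];
  return MODEL_BY_TIER[tier];
}
```

Keeping the routing table as data rather than scattered conditionals makes it easy to adjust when prices or model capabilities change.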

For applications that need SEO and content marketing alongside AI features, I partner with Frog Stone Media for search strategy. AI-powered products still need users to find them.