AI Architecture in Practice

How I build bespoke AI development environments for each project I work on. Custom skills, hooks, subagents, and MCP servers matched to the specific constraints, voice, and token budget of the codebase they live in. Six projects, six environments, zero shared code.

The discipline

Every project I work on gets a Claude Code environment built from scratch for that project. Nothing travels between them. Not a skill, not a hook, not a subagent, not an MCP server. Six projects below, six completely different environments, zero shared code.

The reusable template instinct is wrong for AI tooling. Every project has a different voice, a different dataset, a different token budget, and a different definition of what counts as wrong. A skill that works perfectly on one project is dead weight in another, or worse, a quiet liability that breaks on the edge case the original project never had to handle. The job is to figure out what this project actually needs, build it tight, and ship it inside the project’s sandbox. Then do it again next project.

The thesis in one picture

The six projects

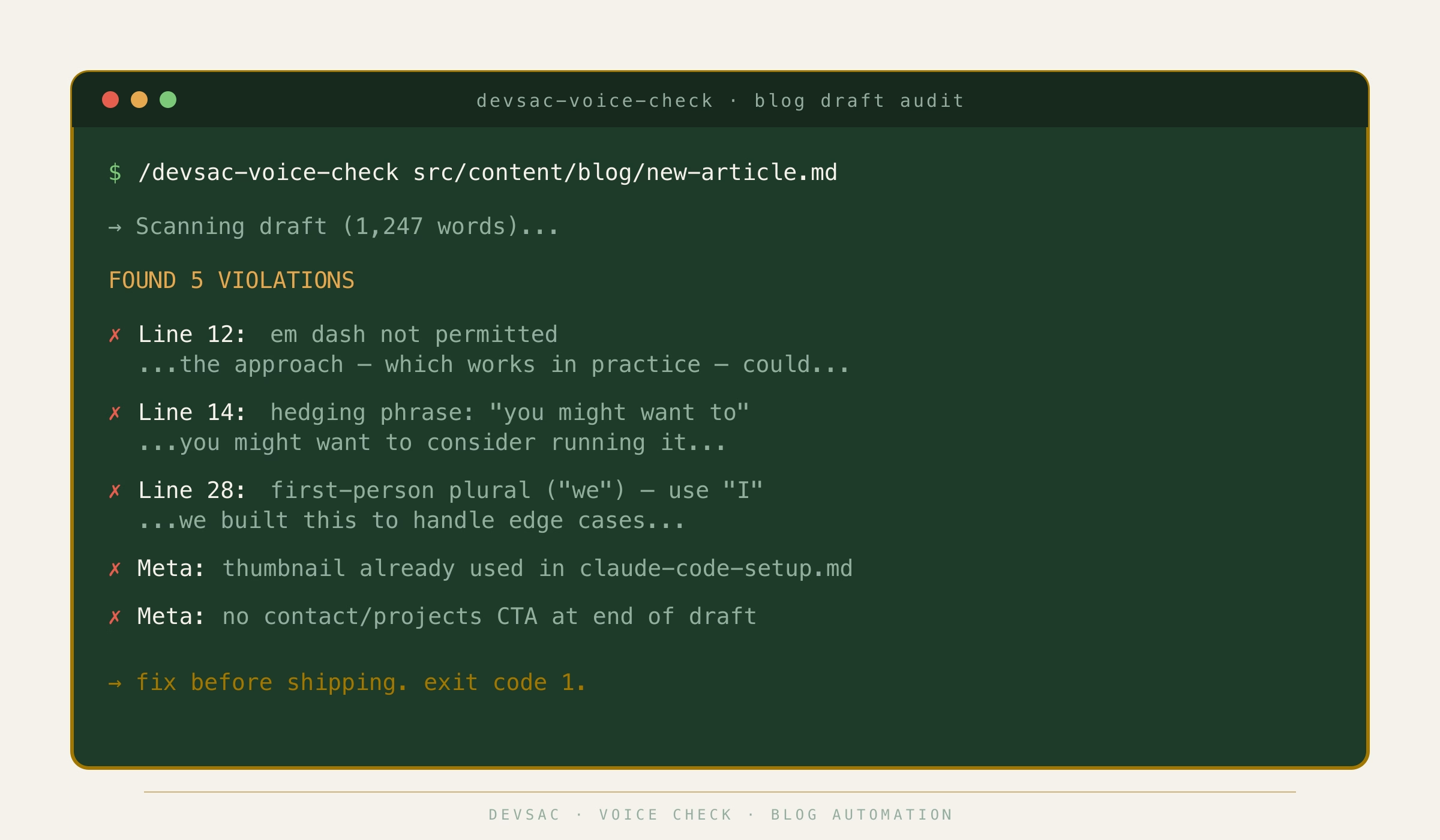

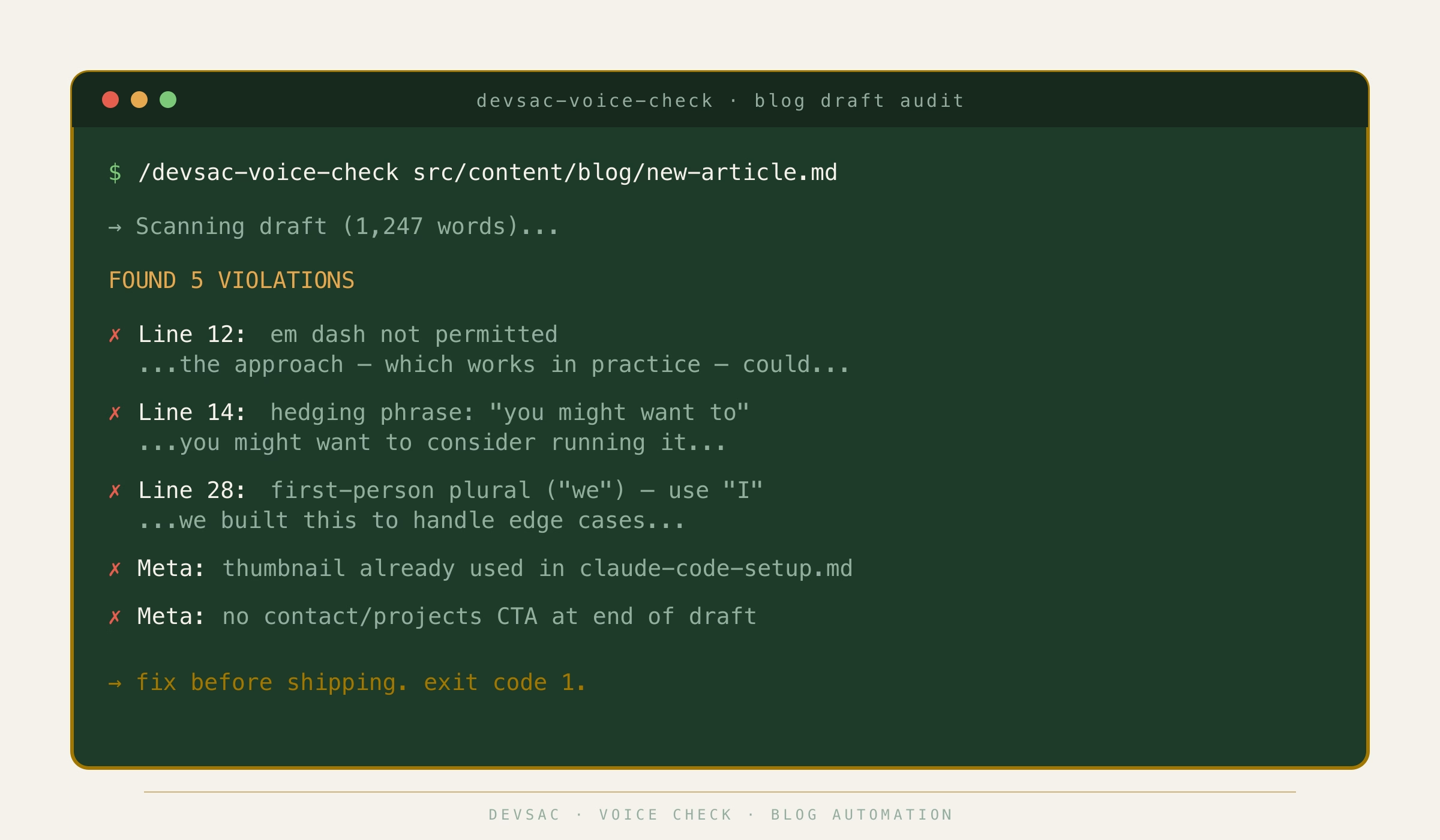

1. DevSac blog automation

The constraint. Strict voice and publishing rules. No em dashes, no hedging, no first-person plural, thumbnails that can never repeat across articles, a word count ceiling, and a CTA required at the end of every post.

The custom build. devsac-voice-check runs a pre-flight audit on every draft. devsac-image-prep enforces AVIF quality 90 and the no-reuse rule on thumbnails. devsac-social-post generates the matching Google My Business and Facebook post with a Ken Burns video from the source image. A content-voice-editor subagent restructures drafts without rephrasing them, so my writing voice survives the edit. Seven file-edit hooks enforce everything from thumbnail uniqueness to word count to root-level PNG placement before a commit ever happens.
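The mechanical rules in the constraint list can be illustrated as a plain function that returns every violation it finds in a draft. This is a minimal sketch, not the devsac-voice-check skill itself. The 1,500-word ceiling, the hedge-word list, the first-person-plural pattern, and the call-to-action keyword heuristic are all assumptions chosen for illustration.

```python
import re
from dataclasses import dataclass
from typing import List

# Illustrative thresholds and word lists (assumptions, not the real skill's rules).
WORD_CEILING = 1500
HEDGE_WORDS = ("perhaps", "maybe", "might", "arguably", "somewhat")
# Case-insensitive, so it also flags "US"; a deliberately rough heuristic.
FIRST_PERSON_PLURAL = re.compile(r"\b(?:we|us|our|ours|ourselves)\b", re.IGNORECASE)
CTA_PATTERN = re.compile(r"\b(?:contact|get in touch|call|book|schedule)\b", re.IGNORECASE)
EM_DASH = "\u2014"


@dataclass
class Violation:
    rule: str
    detail: str


def voice_check(draft: str) -> List[Violation]:
    """Run every rule and return all violations (an empty list means the draft passes)."""
    violations: List[Violation] = []

    if EM_DASH in draft:
        violations.append(Violation("em-dash", f"{draft.count(EM_DASH)} found"))

    for word in HEDGE_WORDS:
        if re.search(rf"\b{word}\b", draft, re.IGNORECASE):
            violations.append(Violation("hedging", word))

    hits = sorted({m.group(0).lower() for m in FIRST_PERSON_PLURAL.finditer(draft)})
    if hits:
        violations.append(Violation("first-person-plural", ", ".join(hits)))

    word_count = len(draft.split())
    if word_count > WORD_CEILING:
        violations.append(Violation("word-count", f"{word_count} words > {WORD_CEILING}"))

    # The CTA must appear in the final non-empty paragraph.
    paragraphs = [p for p in draft.split("\n\n") if p.strip()]
    if not paragraphs or not CTA_PATTERN.search(paragraphs[-1]):
        violations.append(Violation("cta", "final paragraph has no call to action"))

    return violations
```

Returning every violation instead of stopping at the first one fits the goal of keeping the voice check inline, so a draft gets fixed in one pass.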

The outcome. No draft ships without passing the voice audit. No thumbnail gets reused by accident. Social posts come out of one skill call instead of a 20-minute manual cycle.
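The no-reuse rule for thumbnails can be enforced by hashing each image's bytes and refusing any hash that another article already owns. A minimal sketch, assuming an in-memory registry; the class and method names are hypothetical, and a real hook would need to persist the registry between sessions. Byte hashing only catches exact reuse: a re-encoded or resized copy of the same photo hashes differently, so catching those would take a perceptual hash instead.

```python
import hashlib
from typing import Dict, Optional


class ThumbnailRegistry:
    """Maps a thumbnail's SHA-256 content hash to the article that first used it."""

    def __init__(self) -> None:
        self.used: Dict[str, str] = {}

    def claim(self, article_slug: str, image_bytes: bytes) -> Optional[str]:
        """Record an article's thumbnail.

        Returns None on success, or the slug of the article that already uses
        an identical image (in which case nothing is recorded).
        """
        digest = hashlib.sha256(image_bytes).hexdigest()
        owner = self.used.get(digest)
        if owner is not None and owner != article_slug:
            return owner  # reuse detected: report the original owner
        self.used[digest] = article_slug
        return None
```

In practice the bytes would come from something like `Path("thumb.avif").read_bytes()`, and a non-None return would block the edit.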

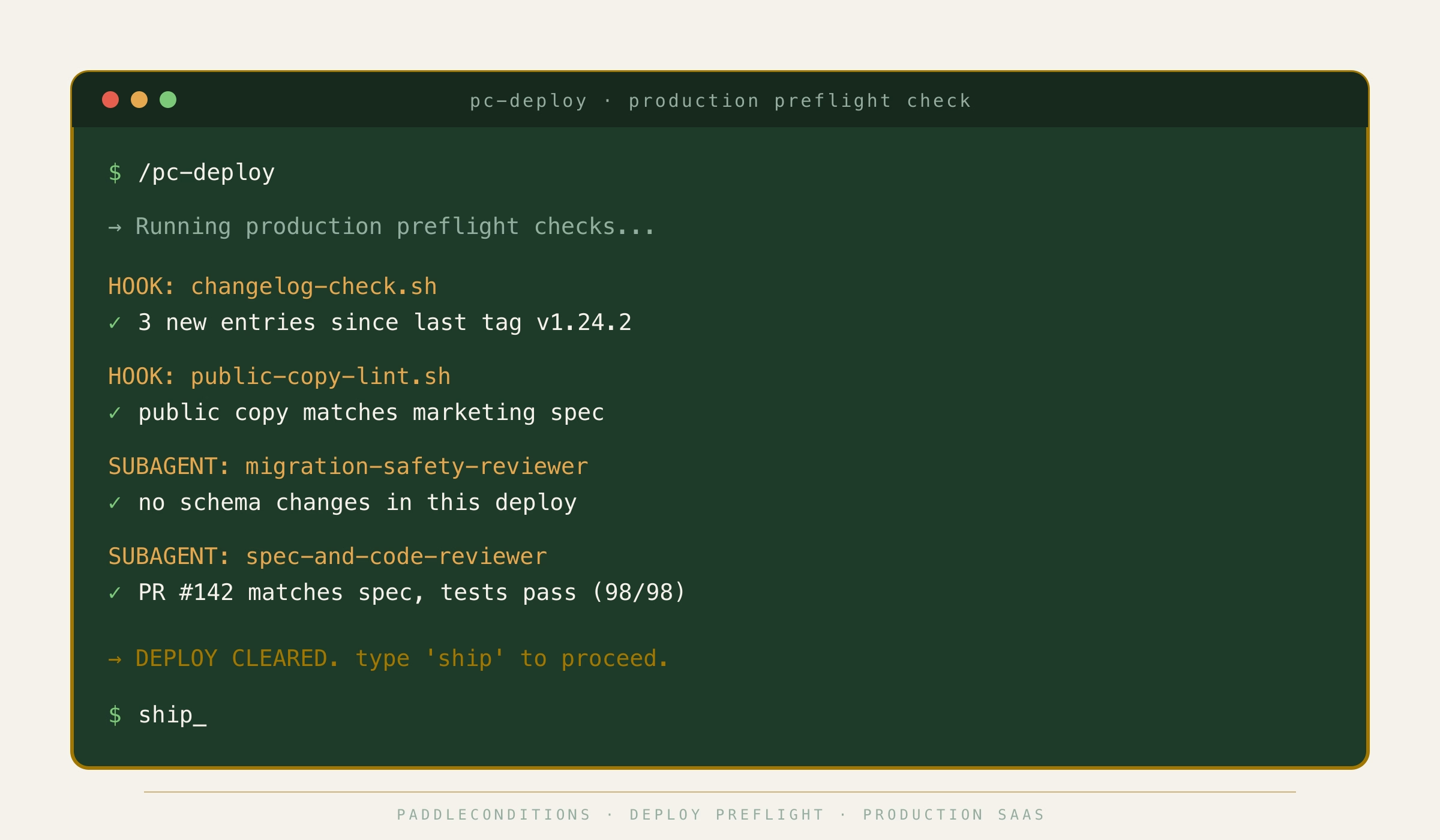

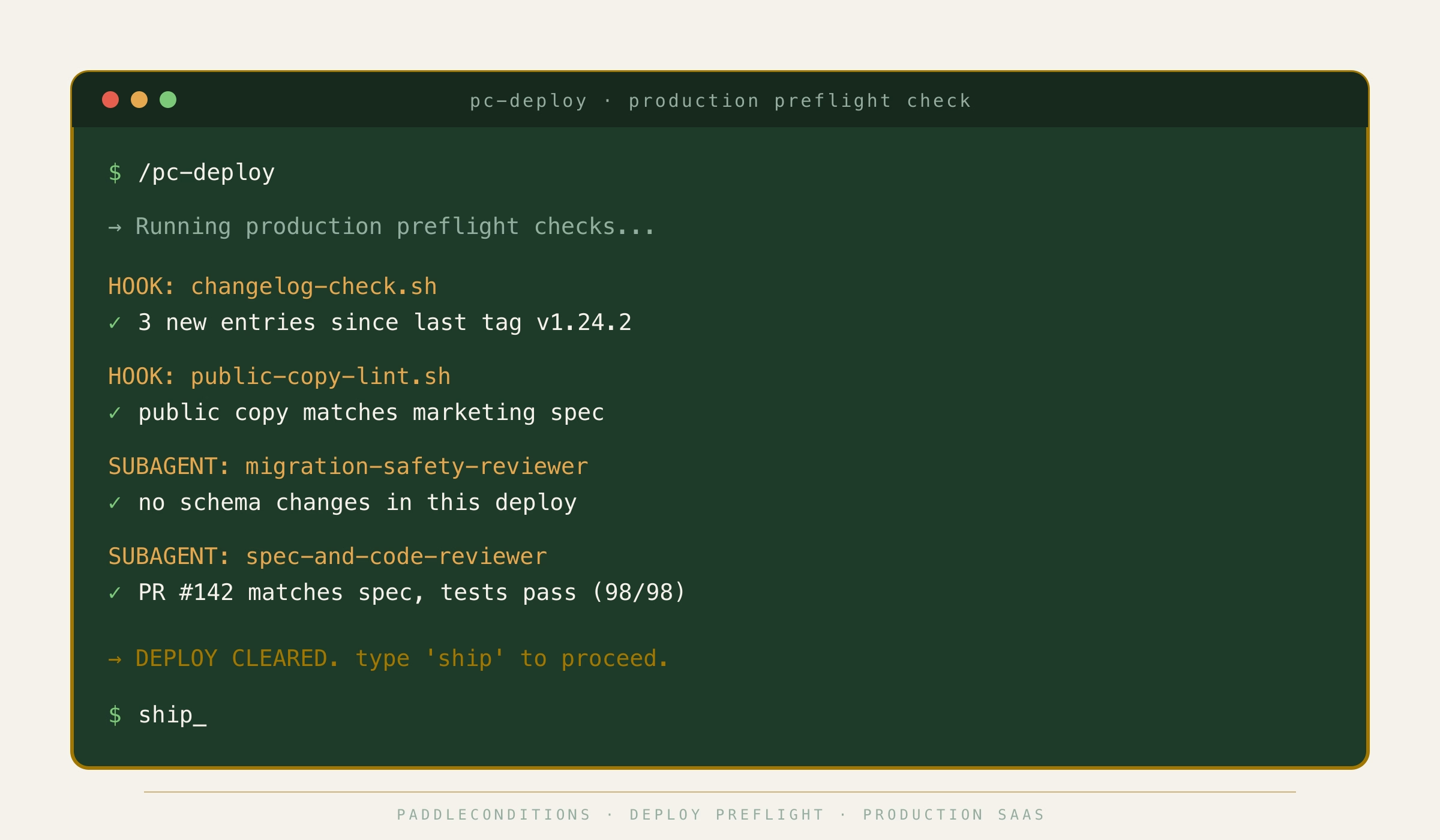

2. PaddleConditions

The constraint. A real subscription app with paying Stripe customers and Home Assistant integrations. Breakage costs money and trust. Migrations are irreversible in production. Public copy has to stay on-brand across every deploy.

The custom build. pc-deploy runs the ship flow and blocks anything that fails preflight. pc-commit enforces commit discipline before it lets anything move. log-change keeps the changelog honest. A migration-safety-reviewer subagent blocks risky schema work until it has been reviewed. A spec-and-code-reviewer subagent checks every pull request against the spec. Two hooks, changelog-check.sh and public-copy-lint.sh, guard the changelog and the marketing copy on every edit.

The outcome. Deploys get a second pair of eyes before they ship. Marketing copy cannot silently drift. The changelog stops going stale.

3. SacGroceries

The constraint. 128,000+ products, a price-evaluation pipeline that can go stale silently, and a database you really do not want to nuke. A single bad import can invalidate weeks of price history. The writing voice for merchant outreach and product descriptions is also specific to this project, and it is not the same voice any other site uses.

The custom build. import-prices, new-evaluator, and post-import-cleanup skills for the pipeline. A review-emails skill for the merchant outreach side. A project-local humanizer skill for content copy, which is a separate file from the humanizer skill that lives in BestTreesToPlant, because the two sites need different voices. Two subagents: price-data-auditor catches regressions before they hit the database, and evaluator-debugger diagnoses a broken evaluator without a full debugging session. Two hooks: changelog-guard.sh and db-rebuild-guard.sh, the second of which hard-blocks accidental database rebuilds.
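A guard like db-rebuild-guard.sh can work as a Claude Code PreToolUse hook: Claude Code passes the pending tool call as JSON on stdin (including `tool_name` and `tool_input`), and a hook that exits with code 2 blocks the call and feeds its stderr back to Claude. The sketch below is in Python rather than shell. Its regex patterns, including the `rebuild_db` script name, are hypothetical stand-ins for whatever commands would actually wipe this project's database.

```python
import json
import re
import sys
from typing import Optional, TextIO

# Hypothetical patterns for commands that would wipe or rebuild the database.
DANGEROUS_PATTERNS = [
    re.compile(r"\brm\b.*\.(?:db|sqlite3?)\b"),                   # deleting the database file
    re.compile(r"\bDROP\s+(?:TABLE|DATABASE)\b", re.IGNORECASE),  # destructive SQL
    re.compile(r"\brebuild[_-]db\b"),                             # hypothetical rebuild script
]


def blocked_reason(command: str) -> Optional[str]:
    """Return why a shell command should be blocked, or None if it looks safe."""
    for pattern in DANGEROUS_PATTERNS:
        if pattern.search(command):
            return f"db-rebuild-guard: refusing command matching {pattern.pattern!r}"
    return None


def main(stream: TextIO = sys.stdin) -> int:
    """PreToolUse entry point: read the pending tool call, return 2 to block it."""
    event = json.load(stream)
    if event.get("tool_name") != "Bash":
        return 0  # this guard only inspects shell commands
    command = event.get("tool_input", {}).get("command", "")
    reason = blocked_reason(command)
    if reason is not None:
        print(reason, file=sys.stderr)  # stderr is shown to Claude as the block reason
        return 2
    return 0
```

Registered as a PreToolUse hook with a `Bash` matcher in `.claude/settings.json`, the script would end with `sys.exit(main())`. Matching on the command string is deliberately blunt: a false block costs one retry, while a missed rebuild can cost weeks of price history.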

The outcome. Price regressions get caught by audit, not by users. The database stays safe from a bad command. Same skill name, different project, different file. That is the whole point.

4. TWD-Content

The constraint. The Weekly Driver is a Mediavine WordPress site with 243,000 monthly visitors. Ad revenue is directly tied to traffic, so nothing that regresses the site can ship. A core dataset has to stay internally consistent across every article that touches it.

The custom build. generate-wp-blocks and wp-publish skills for the HTML-to-WordPress paste flow. A spec-verifier subagent checks every draft against the content spec. Two Python hooks: one keeps the editorial tracker in sync as drafts move, and one blocks any edit that would break a key data integrity rule across the article set.

The outcome. WordPress blocks come out of one skill call. The editorial tracker stays in sync automatically. The core dataset stays internally consistent without a human checking it manually.

5. MK-Library

The constraint. MK-Library is the other Mediavine site, 226,000 monthly visitors. Same monetization tier as TWD, completely different operational shape: every article is planned from a SEMrush brief, and images need AVIF conversion before WordPress ever sees them.

The custom build. semrush-brief turns raw SEMrush data into a usable article brief. article-handoff-update and wp-push skills carry drafts into the WordPress paste flow. An article-publish-readiness subagent gates publishing until the project checklist passes. A content-tracker-sync subagent keeps the editorial tracker consistent across sessions. Two visible hooks: avif-convert.sh runs AVIF conversion automatically on every image edit, and validate-wp-blocks.sh sanity-checks WordPress block markup before push.
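The checks inside validate-wp-blocks.sh are not spelled out here. One plausible core check is that block delimiters are balanced: WordPress serializes blocks as HTML comments such as `<!-- wp:paragraph -->`, `<!-- /wp:paragraph -->`, and the self-closing `<!-- wp:separator /-->`, with optional JSON attributes after the block name. This Python sketch walks those delimiters, validates the attribute JSON, and reports openers and closers that do not pair up. The function name and error strings are hypothetical, and it does not compare inner HTML against each block's saved output, which is what the block editor's own validation does.

```python
import json
import re
from typing import List

# opener <!-- wp:name {"attr":1} -->, closer <!-- /wp:name -->, self-closing <!-- wp:name /-->
# Core blocks omit the "core/" namespace; third-party blocks use "namespace/name".
BLOCK_DELIMITER = re.compile(
    r"<!--\s+(?P<closer>/)?wp:(?P<name>[a-z][a-z0-9_-]*(?:/[a-z][a-z0-9_-]*)?)"
    r"(?:\s+(?P<attrs>\{.*?\}))?\s+(?P<void>/)?-->",
    re.DOTALL,
)


def validate_blocks(markup: str) -> List[str]:
    """Return a list of block-delimiter problems (an empty list means it looks valid)."""
    errors: List[str] = []
    stack: List[str] = []  # names of blocks opened but not yet closed
    for match in BLOCK_DELIMITER.finditer(markup):
        name = match.group("name")
        attrs = match.group("attrs")
        if attrs is not None:
            try:
                json.loads(attrs)
            except json.JSONDecodeError:
                errors.append(f"invalid JSON attributes on wp:{name}")
        if match.group("void"):
            continue  # self-closing block: nothing to balance
        if match.group("closer") is None:
            stack.append(name)
        elif not stack:
            errors.append(f"closing wp:{name} with no open block")
        else:
            # Pop either way so one mismatch does not cascade into more errors.
            opened = stack.pop()
            if opened != name:
                errors.append(f"closing wp:{name} but wp:{opened} is open")
    errors.extend(f"unclosed wp:{name}" for name in reversed(stack))
    return errors
```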

The outcome. Every article starts from a SEMrush-backed plan. Images are AVIF by the time WordPress sees them. Bad WordPress block markup gets caught before it is pushed. Two Mediavine sites, two totally different Claude Code environments, because the operational constraints are not the same.

6. Amazon Creators API

The constraint. Amazon’s PA-API is painful. OAuth, inconsistent JSON shapes, rate limits, throttling. Every content site I run needs product data for affiliate links, and reimplementing PA-API in each project is a waste.

The custom build. A standalone TypeScript MCP server with 7 tools that make PA-API calls feel like plain function calls inside Claude Code. AES-256 encrypted credentials, built-in rate limiting, 12 CLI commands, 3 production dependencies.
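The page does not say how the server's built-in rate limiting works. A token bucket is one common approach, sketched here in Python even though the actual server is TypeScript. The class name, its `rate` and `capacity` parameters, and the injectable clock and sleep functions are assumptions for illustration, not the server's API; the right rate depends on the limits Amazon assigns to the account.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts of up to `capacity` calls, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so an initial burst goes through
        self.clock = clock
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def try_acquire(self) -> bool:
        """Take one token if available; return False instead of waiting."""
        self._refill()
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

    def wait_time(self) -> float:
        """Seconds until one token will be available (0.0 if one is available now)."""
        self._refill()
        return max(0.0, (1 - self.tokens) / self.rate)

    def acquire(self, sleep: Callable[[float], None] = time.sleep) -> None:
        """Block until a token is available, then take it."""
        while not self.try_acquire():
            sleep(self.wait_time())
```

Injecting the clock makes the limiter testable without real waiting. `try_acquire` lets a tool call fail fast with a throttling message, while `acquire` queues the request instead.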

The outcome. Every content site that needs Amazon product data now talks to one MCP server. The full teardown lives at /projects/amazon-creators-api/.

What every build has in common

Token budget awareness. Every skill, subagent, and hook is designed to fit a token budget before it is designed to fit a feature list. A subagent that blows out the parent context is a liability, not a win. I plan which work gets delegated, which work stays in the main loop, and which work can run as a background hook. On SacGroceries, that meant pushing the evaluator debugger into a subagent so the main session stays small. On DevSac, it meant keeping the voice check inline so edits happen in one pass.

Sandboxing. Nothing I build for one project reaches into another. Every custom skill, hook, and MCP server lives inside the project it is built for, with no assumption that anything else exists. When a client hires me to work on their codebase, their project gets its own environment from scratch, the same discipline I use on my own portfolio applied to their sandbox. Portability and no lock-in are features.

Reliability under edge cases. The hooks and subagents above exist because the edge case that breaks a project does not break it politely. A migration rolls forward halfway. An evaluator returns empty. A WordPress block renders with a missing shortcode. Every custom build assumes the edge case will happen and decides what the right failure mode is before it does.

Prompt as a unit. I treat every prompt inside a skill or subagent the same way I treat a function: inputs defined, outputs predictable, regression-testable. A prompt that works once is a prototype. A prompt that works every time, across every input the project will realistically throw at it, is production.

Cost control. AI API spend gets out of hand quietly. Every build includes explicit cost boundaries: which model is used for which step, where caching applies, what gets batched, what runs locally versus through an API call. On high-volume projects I build cost dashboards so the number is visible before it is a problem.
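One minimal way to make "which model for which step" explicit is a routing table plus a budget check that runs before a batch starts. Everything in this sketch is hypothetical: the step names, the per-million-token prices (placeholders, not any provider's actual rates), and the function names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    input_per_mtok: float   # USD per million input tokens
    output_per_mtok: float  # USD per million output tokens


# Hypothetical step-to-model routing with placeholder prices. Replace these
# with the current published rates of the models a project actually uses.
ROUTING = {
    "classify": ModelPrice(input_per_mtok=1.0, output_per_mtok=5.0),  # small, cheap model
    "draft": ModelPrice(input_per_mtok=3.0, output_per_mtok=15.0),    # larger model
}


class BudgetExceeded(RuntimeError):
    pass


def step_cost(step: str, input_tokens: int, output_tokens: int, calls: int = 1) -> float:
    """Estimated USD cost of running one pipeline step `calls` times."""
    price = ROUTING[step]
    per_call = (input_tokens * price.input_per_mtok
                + output_tokens * price.output_per_mtok) / 1_000_000
    return per_call * calls


def enforce_budget(plan: dict[str, float], limit_usd: float) -> float:
    """Sum the planned step costs and refuse the run if the total exceeds the limit."""
    total = sum(plan.values())
    if total > limit_usd:
        raise BudgetExceeded(f"planned ${total:.2f} exceeds limit ${limit_usd:.2f}")
    return total
```

At these placeholder rates, 10,000 classify calls at 500 input and 20 output tokens cost 10,000 × (500 × 1.0 + 20 × 5.0) / 1,000,000 = $6.00, and 200 draft calls at 2,000 input and 1,000 output tokens cost 200 × (2,000 × 3.0 + 1,000 × 15.0) / 1,000,000 = $4.20. A $10 limit would stop that $10.20 run before the first call.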

What this means for your project

I walk into your codebase cold, read how your team actually works, and find the places where AI would save hours or quietly cost you them. Then I build the custom skills, hooks, subagents, and MCP servers that fit your project’s constraints. Not a template. Not a rebrand of something I built for someone else. Tooling that knows your voice, your data, your deploy process, and your token budget, and gets out of your team’s way.

If you are trying to figure out whether an AI Architect makes sense for your business, here is what the work actually looks like as a service, including how I scope, what the first two weeks look like, and how I price it. Or if you already know what is eating your team’s time, tell me about it and I will tell you honestly whether it is a fit.

Like what you see?

I build tools that solve real problems. If you have an idea or a project that needs engineering, let's talk.
